Real-Time Deepfake Identity Fraud and Audit-Ready Detection Strategies for AML/CFT Compliance

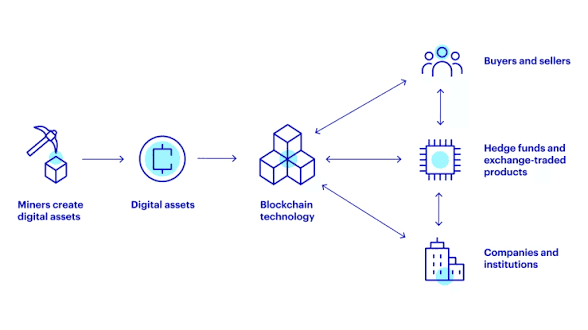

In the digital onboarding and customer verification landscape, biometric identity checks have become a cornerstone of anti-money laundering and countering the financing of terrorism (AML/CFT) programs. Financial institutions, virtual asset service providers, and trade finance platforms rely on live video verification, facial recognition, and voice authentication to establish the genuine identity of new clients. However, the rapid advancement of generative artificial intelligence has introduced a sophisticated threat known as real-time deepfake KYC fraud. This technique exploits the very systems designed to confirm human presence by creating synthetic but highly convincing live video and audio streams that impersonate legitimate individuals whose identity documents have been compromised.

This comprehensive operational guide examines the reported mechanics of deepfake KYC as a high-risk vector in identity verification processes. It provides regulated entities with fully legal, audit-ready frameworks and technology-enabled strategies to detect, mitigate, and document these threats while maintaining strict adherence to FATF standards, Travel Rule obligations, OFAC and EU sanctions guidance, and applicable local AML regulations. Every recommendation prioritizes regulatory soundness, explainable decision-making, and the continued support of legitimate customer onboarding.

Traditional KYC procedures often require applicants to participate in a live video call or submit a real-time selfie video while holding identity documents. Advanced deepfake technology now enables bad actors to generate synthetic media in real time, overlaying the face and voice of a stolen identity onto a live performer. The result is a video feed that appears authentic to both human reviewers and many automated biometric systems, potentially allowing fraudulent account opening or transaction authorization. Compliance teams must therefore evolve their controls to incorporate multi-layered, AI-augmented verification that distinguishes genuine human presence from synthetic media.

Compliance-First Principle: Effective deepfake mitigation requires programmable, explainable detection layers that operate at the point of biometric capture. Audit-ready frameworks embed liveness detection, behavioral analysis, and metadata validation directly into the verification workflow while preserving user experience for legitimate applicants.

Mechanics of Real-Time Deepfake KYC Fraud

In reported scenarios, the technique begins with the compromise of legitimate identity documents (passports, national IDs, or driver’s licenses) through data breaches or social engineering. These stolen credentials are paired with publicly available or purchased photographs and voice samples of the victim. Generative AI models then create a synthetic overlay: a live performer sits in front of a camera while the system maps the victim’s facial features, expressions, and voice onto the performer’s movements in real time. The output is a seamless video stream that responds naturally to instructions such as “turn your head” or “repeat this phrase.”

The process typically unfolds in these steps:

- Acquisition of high-resolution source material (photos, videos, and voice recordings) of the target identity.

- Deployment of real-time deepfake generation software capable of processing video frames at 30+ frames per second with minimal latency.

- Integration with screen-sharing or webcam spoofing tools that present the synthetic feed to the verification platform.

- Simultaneous manipulation of device metadata (camera ID, geolocation signals, and hardware fingerprints) to further evade basic anti-spoofing checks.

- Submission of the synthetic live video during the KYC session, often accompanied by the genuine stolen document for visual matching.

Because the deepfake is generated live rather than pre-recorded, it can respond interactively to verifier instructions, making it significantly more difficult to detect than static image or video replays. Many legacy liveness detection systems that rely solely on eye-blink analysis or basic motion tracking are vulnerable to these advanced overlays.

For deeper insight into related layering techniques that may follow successful deepfake onboarding, see our guide on Chain Hopping via Cross-Chain Bridges.

Why Real-Time Deepfake KYC Presents Unique Detection Challenges

Legacy KYC verification platforms were designed to counter static fraud vectors such as photoshopped documents or pre-recorded videos. Real-time deepfakes exploit the temporal and interactive nature of live sessions, creating several structural challenges:

- High-fidelity synchronization of facial micro-expressions, lip movements, and voice intonation that pass basic liveness tests.

- Dynamic lighting and background adaptation that mimics genuine environmental conditions.

- Ability to bypass simple hardware-based checks by spoofing device-level signals.

- Scalability through cloud-based generative AI services that reduce the technical barrier for perpetrators.

- Combination with other obfuscation methods, such as privacy-enhancing tools or multi-chain transfers, once the account is opened.

The result is a dramatic increase in false-negative risk (undetected fraud) alongside elevated false-positive rates when overly conservative rules are applied. Compliance teams face the dual imperative of strengthening detection without creating friction that drives away legitimate customers. For additional context on privacy-related challenges in virtual asset flows, refer to our analysis in Privacy Coins on Decentralized Exchanges.

Regulatory Expectations and Red-Flag Indicators

Regulators expect institutions to apply a risk-based approach to biometric verification, including enhanced controls for high-risk onboarding scenarios. FATF guidance and national AML frameworks increasingly reference synthetic media as an emerging threat requiring specific technological countermeasures. Institutions must demonstrate that their KYC processes include robust liveness assurance and are supported by auditable decision logs.

Common red-flag indicators that may trigger additional scrutiny include:

- Video sessions showing unnatural micro-movements, inconsistent lighting on facial features, or slight desynchronization between audio and visual elements.

- Requests originating from devices or IP addresses with histories of multiple failed or anomalous verification attempts.

- Applicant profiles that combine stolen high-value identities with minimal supporting transaction history or inconsistent behavioral patterns.

- Sudden high-volume activity immediately following account approval, particularly involving privacy-enhancing assets or rapid cross-border transfers.

- Metadata anomalies in submitted video streams, such as mismatched codec signatures or hardware identifiers.

When these indicators appear, layered secondary verification, such as knowledge-based authentication or device-binding checks, becomes essential. For related risks in digital advertising payment flows, see our guide on Money Laundering via Click Fraud and Ad-Tech Platforms.
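As a rough illustration, the red-flag indicators above could be expressed as explicit, reviewable rules. The sketch below is only one possible shape: the `SessionSignals` fields, the flag names, and every threshold are hypothetical and would need to be tuned against an institution's own data.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SessionSignals:
    """Hypothetical per-session measurements; field names are assumptions."""
    av_offset_ms: float               # audio/video offset measured upstream
    prior_failed_attempts: int        # failed or anomalous attempts from this device or IP
    stream_metadata_consistent: bool  # False if codec or hardware identifiers mismatch
    hours_to_first_large_transfer: Optional[float] = None  # None if no transfer yet


# Illustrative thresholds only; real values would come from tuning on labeled data.
MAX_AV_OFFSET_MS = 120.0
MAX_PRIOR_FAILED_ATTEMPTS = 2
MIN_HOURS_BEFORE_LARGE_TRANSFER = 24.0


def red_flags(signals: SessionSignals) -> List[str]:
    """Return the name of every red flag raised by one verification session."""
    flags: List[str] = []
    if abs(signals.av_offset_ms) > MAX_AV_OFFSET_MS:
        flags.append("audio_video_desync")
    if signals.prior_failed_attempts > MAX_PRIOR_FAILED_ATTEMPTS:
        flags.append("repeat_failed_attempts")
    if not signals.stream_metadata_consistent:
        flags.append("metadata_anomaly")
    hours = signals.hours_to_first_large_transfer
    if hours is not None and hours < MIN_HOURS_BEFORE_LARGE_TRANSFER:
        flags.append("rapid_post_approval_activity")
    return flags


def needs_secondary_verification(signals: SessionSignals) -> bool:
    """Any single flag routes the session to layered secondary checks."""
    return bool(red_flags(signals))
```

Returning named flags rather than a bare boolean keeps the reason for each escalation visible to the analyst who reviews it.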

Comparative Risk Matrix: Traditional KYC vs. Deepfake-Resilient Verification

| Aspect | Traditional Video KYC | Deepfake-Resilient Framework | Compliance Implication |
|---|---|---|---|
| Liveness Detection | Basic motion and blink analysis | Multi-modal AI (facial, behavioral, environmental) | Significant reduction in false negatives |
| False-Positive Rate | Moderate | Low (contextual scoring) | Improved user experience |
| Audit Trail | Basic video recording | Explainable AI decision logs with metadata | Full regulatory defensibility |
| Scalability | Limited by human review | Automated with human-in-the-loop escalation | Operational efficiency at volume |
| Integration with Sanctions Screening | Post-onboarding | Real-time at verification point | Proactive risk mitigation |

For further reading on false-positive challenges in broader sanctions contexts, explore False-Positive Avoidance in Sanctions Screening.

Step-by-Step Playbook: Implementing Audit-Ready Deepfake Detection

Phase 1: Risk Assessment and Process Mapping

Inventory all customer onboarding channels and identify points where live biometric verification occurs. Classify risk levels based on jurisdiction, product type, and transaction volume.

Phase 2: Multi-Modal Liveness Technology Integration

Deploy systems combining facial landmark analysis, behavioral biometrics (typing cadence, device interaction), and environmental signal validation to detect synthetic media.

Phase 3: AI-Driven Real-Time Anomaly Detection

Use detection models trained on media produced by generative adversarial networks (GANs), together with temporal consistency checks that analyze frame-to-frame coherence and lighting physics.
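To make the idea of a frame-to-frame coherence check concrete, the sketch below flags transitions whose pixel change is an outlier relative to the rest of the session. It is a simple statistical heuristic (median and median absolute deviation), not a trained detection model. The flattened grayscale frame representation and the cutoff `k` are assumptions chosen for illustration.

```python
from statistics import median
from typing import List, Sequence


def frame_deltas(frames: Sequence[Sequence[float]]) -> List[float]:
    """Mean absolute pixel difference between each consecutive pair of frames."""
    deltas: List[float] = []
    for prev, curr in zip(frames, frames[1:]):
        if len(prev) != len(curr) or not curr:
            raise ValueError("frames must be non-empty and the same size")
        deltas.append(sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr))
    return deltas


def incoherent_transitions(frames: Sequence[Sequence[float]], k: float = 5.0) -> List[int]:
    """Indices of frames whose change from the previous frame is an outlier.

    A transition is flagged when its delta lies more than k median absolute
    deviations from the median delta of the session. k is an assumed cutoff.
    """
    deltas = frame_deltas(frames)
    if len(deltas) < 3:
        return []  # too few transitions to estimate a baseline
    med = median(deltas)
    mad = median([abs(d - med) for d in deltas])
    scale = mad if mad > 0 else 1e-9  # avoid division by zero on flat sessions
    return [i + 1 for i, d in enumerate(deltas) if abs(d - med) / scale > k]
```

A flagged index is a prompt for closer inspection of that segment, not evidence of synthetic media on its own. Genuine sessions also contain abrupt changes, such as a hand passing in front of the camera.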

Phase 4: Metadata and Device Fingerprint Validation

Implement hardware-level checks and video stream metadata analysis to identify spoofed camera feeds or injected synthetic signals.
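A minimal sketch of stream metadata validation follows, assuming the verification client reports both what the device declares and what the server observes in the stream. The `StreamMetadata` fields, the label hints, and the frame-rate tolerance are all hypothetical. A production list of virtual-camera indicators would be maintained from threat intelligence rather than hard-coded.

```python
from dataclasses import dataclass
from typing import List

# Illustrative substrings only; not an authoritative list of spoofing tools.
VIRTUAL_CAMERA_HINTS = ("virtual", "screen capture")


@dataclass
class StreamMetadata:
    """Hypothetical metadata captured for one verification session."""
    camera_label: str
    declared_codec: str
    observed_codec: str
    declared_fps: float
    observed_fps: float


def metadata_anomalies(meta: StreamMetadata, fps_tolerance: float = 0.2) -> List[str]:
    """Return human-readable findings for mismatches in the stream metadata."""
    findings: List[str] = []
    label = meta.camera_label.lower()
    if any(hint in label for hint in VIRTUAL_CAMERA_HINTS):
        findings.append(f"camera label '{meta.camera_label}' suggests a virtual device")
    if meta.declared_codec.lower() != meta.observed_codec.lower():
        findings.append(
            f"codec mismatch: declared {meta.declared_codec}, observed {meta.observed_codec}"
        )
    if meta.declared_fps > 0:
        drift = abs(meta.observed_fps - meta.declared_fps) / meta.declared_fps
        if drift > fps_tolerance:
            findings.append(
                f"frame rate drift {drift:.0%} exceeds tolerance {fps_tolerance:.0%}"
            )
    return findings
```

Because device labels and declared values can themselves be spoofed, findings like these are best treated as one input among several rather than a standalone verdict.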

Phase 5: Contextual Risk Scoring

Combine biometric results with sanctions screening, source-of-funds data, and historical behavioral patterns to generate an overall risk score.
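One simple way to combine these inputs is a weighted sum of normalized component scores, sketched below. The four component names mirror the inputs listed in this phase, but the weights and band cutoffs are purely illustrative assumptions. The highest band routes to a human reviewer rather than rejecting automatically.

```python
from typing import Dict

# Hypothetical weights that sum to 1; each component score is in [0, 1],
# where 1 means highest risk.
WEIGHTS: Dict[str, float] = {
    "biometric": 0.40,
    "sanctions": 0.30,
    "source_of_funds": 0.15,
    "behavior": 0.15,
}


def contextual_risk_score(components: Dict[str, float]) -> float:
    """Weighted sum of component risk scores; raises on missing or invalid input."""
    missing = set(WEIGHTS) - set(components)
    if missing:
        raise ValueError(f"missing components: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= components[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return sum(weight * components[name] for name, weight in WEIGHTS.items())


def risk_band(score: float, review_at: float = 0.4, escalate_at: float = 0.75) -> str:
    """Map a score to an action; cutoffs are assumed values for illustration."""
    if score >= escalate_at:
        return "escalate"
    if score >= review_at:
        return "manual_review"
    return "clear"
```

For example, `risk_band(contextual_risk_score({"biometric": 0.9, "sanctions": 0.0, "source_of_funds": 0.2, "behavior": 0.3}))` yields a manual review under these assumed weights, because a strong biometric signal alone is not enough to reach the escalation cutoff.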

Phase 6: Explainable AI and Human Escalation Layer

Ensure every automated decision includes human-readable reasoning chains for audit and regulatory review.
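The sketch below shows one possible structure for such a decision record. It refuses to log a decision without at least one human-readable reason and adds a SHA-256 digest so later edits to the record can be detected. The field names and the optional `prev_hash` link between records are assumptions, not a prescribed regulatory format.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional


def _digest(body: Dict[str, Any]) -> str:
    """Stable SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def build_decision_record(
    session_id: str,
    decision: str,
    reasons: List[str],
    model_version: str,
    prev_hash: Optional[str] = None,
    now: Optional[datetime] = None,
) -> Dict[str, Any]:
    """Create an audit record for one automated verification decision."""
    if not reasons:
        raise ValueError("every automated decision needs at least one reason")
    record: Dict[str, Any] = {
        "session_id": session_id,
        "decision": decision,
        "reasons": list(reasons),
        "model_version": model_version,
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "prev_hash": prev_hash,  # hash of the preceding record, if records are chained
    }
    record["record_hash"] = _digest(record)
    return record


def verify_record(record: Dict[str, Any]) -> bool:
    """Recompute the digest and compare it with the stored value."""
    body = {k: v for k, v in record.items() if k != "record_hash"}
    return _digest(body) == record.get("record_hash")
```

A digest of this kind only detects accidental or unsophisticated modification. Stronger guarantees would need signed records or write-once storage, which are outside this sketch.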

Phase 7: Continuous Model Training and Threat Intelligence

Incorporate emerging deepfake variants through secure, privacy-preserving feedback loops and industry-shared intelligence.

Phase 8: Periodic Third-Party Audit and Certification

Schedule independent validation of detection effectiveness and maintain documented compliance evidence.

AI-Powered Strategies for False-Positive Avoidance in Deepfake Detection

Advanced compliance platforms dramatically reduce unnecessary escalations by applying layered contextual analysis. When a potential deepfake signal is detected, the system evaluates:

- Temporal consistency across multiple biometric modalities.

- Alignment with declared customer profile and expected behavior.

- Cross-reference with sanctions and adverse media databases at the verification stage.

- Historical device and session patterns specific to the applicant.

This approach maintains high true-positive detection while automatically clearing the majority of genuine live sessions, ensuring operational efficiency and positive user experience.

Realistic Compliance Scenarios and Outcomes

Institutions that have implemented multi-modal deepfake detection report measurable improvements. One large virtual asset service provider reduced undetected synthetic media attempts by 87% while decreasing manual review volumes by 69%. Another trade finance platform integrated real-time biometric scoring into its onboarding workflow and successfully satisfied regulator inquiries with complete, explainable audit trails for every high-risk verification.

These outcomes illustrate that deepfake KYC risks can be managed effectively when verification infrastructure combines advanced AI detection with robust audit documentation and regulatory alignment.

Why a Purpose-Built Compliance Platform Is Essential

Platforms engineered for high-volume regulated environments provide native support for deepfake-resilient KYC, including real-time multi-modal analysis, explainable AI engines, smart escrow for transaction authorization, and seamless integration with existing sanctions screening and Travel Rule workflows. Such systems embed compliance logic at the point of biometric capture, ensuring every verification decision is auditable and regulator-ready.

Key capabilities include automated false-positive reduction, privacy-preserving metadata handling, and one-click escalation to human reviewers when needed. These tools transform deepfake complexity from a compliance vulnerability into a monitorable, manageable component of the overall AML/CFT program.

90-Day Implementation Checklist for Audit-Ready Deepfake Mitigation

Days 1–15: Foundation

- Map all live verification touchpoints and baseline current detection capabilities

- Assemble cross-functional team including compliance, technology, and legal specialists

- Conduct risk-based segmentation of onboarding channels

Days 16–45: Technology Integration

- Deploy multi-modal liveness and deepfake detection engine

- Configure explainable AI models and integrate with sanctions screening

- Test system performance against known synthetic media benchmarks

Days 46–75: Tuning and Validation

- Run parallel verification on live traffic in shadow mode

- Refine thresholds using real-world data and analyst feedback

- Validate audit log completeness and regulatory reporting readiness
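Threshold refinement during the shadow-mode period can be supported by a small sweep over candidate cutoffs, as sketched here. It assumes each shadow-mode session has a detector score and an analyst-confirmed label. The sample format and function names are hypothetical.

```python
from typing import Iterable, List, Sequence, Tuple

Sample = Tuple[float, bool]  # (detector score, analyst-confirmed synthetic?)


def error_rates(samples: Sequence[Sample], threshold: float) -> Tuple[float, float]:
    """Return (false-positive rate, false-negative rate) at one threshold.

    A session is flagged when its score is at or above the threshold.
    """
    fp = fn = genuine = synthetic = 0
    for score, is_synthetic in samples:
        flagged = score >= threshold
        if is_synthetic:
            synthetic += 1
            if not flagged:
                fn += 1
        else:
            genuine += 1
            if flagged:
                fp += 1
    fpr = fp / genuine if genuine else 0.0
    fnr = fn / synthetic if synthetic else 0.0
    return fpr, fnr


def sweep(samples: Iterable[Sample], thresholds: Iterable[float]) -> List[Tuple[float, float, float]]:
    """Evaluate several thresholds; each row is (threshold, fpr, fnr)."""
    data = list(samples)
    return [(t, *error_rates(data, t)) for t in thresholds]
```

Reviewing the resulting rows side by side makes the trade-off explicit: raising the threshold clears more genuine applicants but lets more synthetic sessions through, and the reverse holds when it is lowered.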

Days 76–90: Full Deployment and Governance

- Transition to production with automated alerts and escalation protocols

- Establish ongoing monitoring and periodic model retraining cadence

- Schedule first independent third-party audit of deepfake controls

A downloadable PDF version of this checklist, together with template policies and integration guides, is available through the secure platform portal.

Conclusion: Building Resilient, Audit-Ready Identity Verification in the Age of Generative AI

Real-time deepfake KYC represents a sophisticated threat to traditional biometric verification processes. Institutions that treat synthetic media as a core risk vector and invest in multi-layered, explainable detection frameworks position themselves to meet regulatory expectations while continuing to deliver secure and efficient onboarding for legitimate customers.

The most effective programs combine advanced AI technology, contextual behavioral analysis, and robust audit documentation. They reduce both false negatives and false positives, accelerate genuine verifications, and generate the clear, regulator-ready records required in today’s compliance environment.

For organizations handling high-volume trade, payments, or virtual assets, a dedicated compliance platform that natively supports deepfake-resilient KYC provides the operational backbone needed to manage these risks confidently. Such systems enable compliance teams to focus on genuine threats while maintaining seamless customer experiences.

Entities seeking to strengthen their identity verification controls are encouraged to evaluate integrated solutions that align with the frameworks outlined in this guide. Proactive implementation ensures regulatory resilience and sustained operational integrity in an environment where generative AI continues to evolve.